Success story: The Future of Soil Hidden in Data

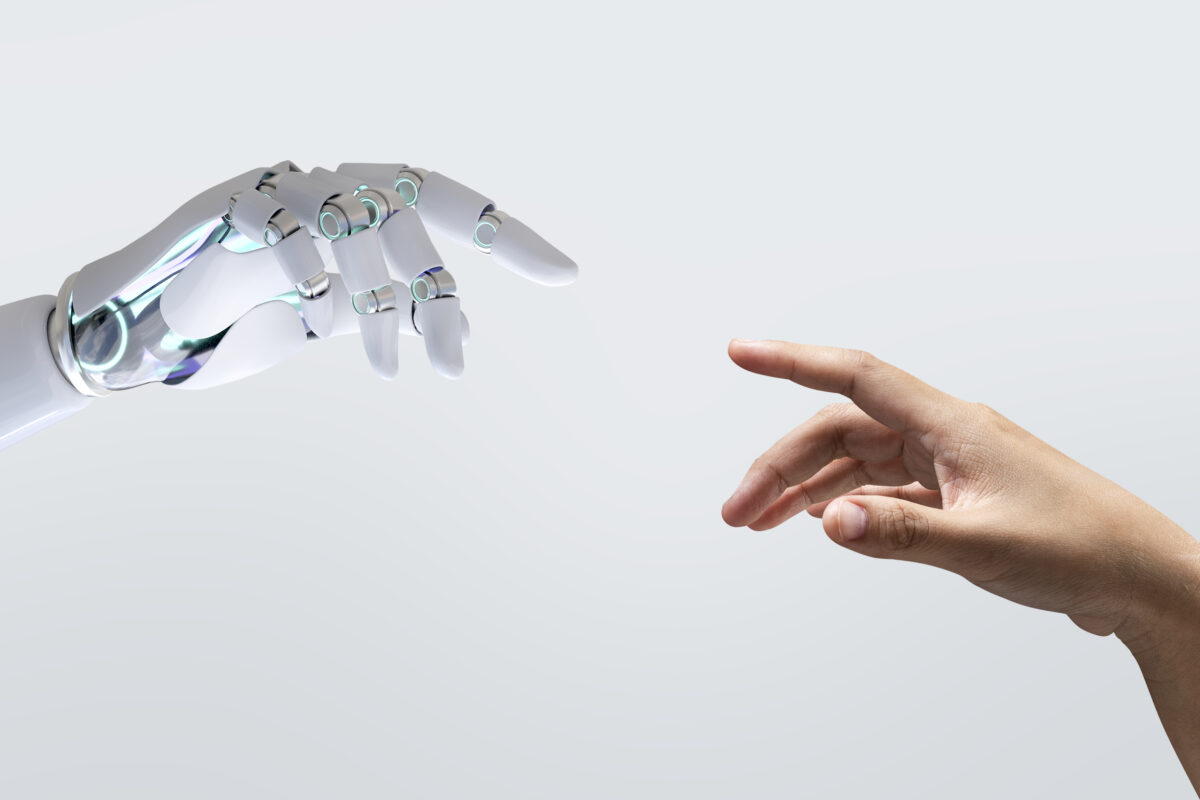

High-Performance Computing (HPC) offers researchers the ability to process enormous volumes of data and uncover connections that would otherwise remain hidden. Today, it is no longer just a tool for technical disciplines – it is increasingly valuable in social and environmental research as well. A great example is a project that harnessed the power of HPC to gain deeper insight into the relationship between humans, soil, and the landscape.

Challenge

Soil represents one of the most valuable resources we have — not only as a space for cultivation and economic activity, but also as a foundation of cultural identity, social relations, and quality of life. The way we use land is changing faster than ever before. The pressures of climate change, infrastructure development, housing demands, and renewable energy expansion are creating new tensions between economic interests, landscape protection, and the public good.

The foundation of fair and sustainable decision-making is participation — involving people in the processes that shape the land and environment they live in. However, if such processes are not well designed, they can lead to distrust, conflicts, and short-sighted solutions.

The research team from the Slovak University of Agriculture in Nitra therefore sought a way to capture, analyse, and connect these diverse perspectives. Their goal was to understand soil as a form of social and cultural capital — a space that brings together economic, environmental, and human values. To achieve this, they needed to process extensive datasets reflecting public discussions, attitudes, and values related to land and soil across the European context.

Solution

To better understand how different stakeholders perceive soil and its value, the team combined data analytics with participatory approaches. During the testing phase, they processed extensive textual data, expert documents, media outputs, and public statements that reflect societal attitudes toward soil and the landscape.

The team applied text mining methods to the data to identify recurring themes, linguistic patterns, and emotional attitudes related to land use. This approach lets researchers infer from the data how opinions form, where tensions arise, and what values people associate with the landscapes they inhabit.
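As a rough illustration of what identifying recurring themes and emotional attitudes can involve, the sketch below counts frequent terms and scores each text against a tiny sentiment lexicon. It is written in Python for brevity, whereas the team worked in R. The stop-word list, the lexicon, and the sample statements are invented for the example and are not the project's data or method.

```python
import re
from collections import Counter

# Hypothetical stop words and sentiment lexicon, invented for this sketch.
STOP_WORDS = {"the", "a", "an", "of", "and", "to", "is", "our", "for", "in", "on"}
LEXICON = {"protect": 1, "fertile": 1, "valuable": 1,
           "loss": -1, "threat": -1, "conflict": -1}


def tokenize(text):
    """Lowercase a text and split it into alphabetic tokens without stop words."""
    return [t for t in re.findall(r"[a-z]+", text.lower()) if t not in STOP_WORDS]


def recurring_terms(texts, top_n=3):
    """Return the top_n most frequent terms across all texts."""
    counts = Counter()
    for text in texts:
        counts.update(tokenize(text))
    return counts.most_common(top_n)


def sentiment_score(text):
    """Sum the lexicon weights of the tokens: above zero leans positive."""
    return sum(LEXICON.get(t, 0) for t in tokenize(text))


# Invented sample statements, not taken from the project corpus.
texts = [
    "Fertile soil is valuable and we must protect the soil.",
    "New roads are a threat to farmland and mean loss of soil.",
]
print(recurring_terms(texts, top_n=1))        # [('soil', 3)]
print([sentiment_score(t) for t in texts])    # [3, -2]
```

A real corpus would call for proper language-specific tokenisation and a validated lexicon; the point here is only the shape of the computation.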

The goal of the research is not merely to collect information, but to transform it into actionable insights that help build consensus among the public, experts, and policymakers.

Use of HPC Infrastructure

The analysis of such extensive textual data required computational power beyond the capabilities of standard workstations. Therefore, the research team used the computing infrastructure provided by NSCC Slovakia to carry out the data processing.

In the testing phase, the computations ran on a supercomputer in an R environment and consumed 128 core-hours, which allowed large datasets to be processed in parallel within a short time. This approach significantly reduced the analysis time while allowing the application of complex methodological frameworks typical for social and environmental data, such as modelling relationships between actors, tracking the occurrence of key concepts, and visualizing linguistic patterns.
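Parallel text processing of this kind usually follows a split, count, and merge pattern: the corpus is cut into chunks, each chunk is processed independently, and the partial results are combined. The sketch below shows that pattern for tracking key concepts. It uses Python rather than the team's R environment, and all function names, the chunk size, and the worker count are assumptions made for illustration. The last helper converts a core-hour budget into ideal run time; the source states only the 128 core-hours, not the number of cores used.

```python
import re
from collections import Counter
from concurrent.futures import ProcessPoolExecutor


def chunked(items, size):
    """Split a list into consecutive chunks of at most `size` items."""
    return [items[i:i + size] for i in range(0, len(items), size)]


def count_chunk(task):
    """Count how often each key concept occurs in one chunk of documents."""
    docs, concepts = task
    counts = Counter()
    for doc in docs:
        for token in re.findall(r"[a-z]+", doc.lower()):
            if token in concepts:
                counts[token] += 1
    return counts


def count_concepts(docs, concepts, chunk_size=2, map_fn=map):
    """Count concepts chunk by chunk and merge the partial results.

    map_fn defaults to the built-in serial map; passing a pool's map
    distributes the chunks across worker processes instead.
    """
    tasks = [(chunk, frozenset(concepts)) for chunk in chunked(docs, chunk_size)]
    total = Counter()
    for partial in map_fn(count_chunk, tasks):
        total.update(partial)
    return total


def count_concepts_parallel(docs, concepts, workers=4, chunk_size=2):
    """Same computation, with chunks handled by a pool of worker processes."""
    with ProcessPoolExecutor(max_workers=workers) as pool:
        return count_concepts(docs, concepts, chunk_size, map_fn=pool.map)


def wall_clock_hours(core_hours, cores):
    """Ideal run time for a core-hour budget, assuming perfect scaling."""
    return core_hours / cores
```

Under perfect scaling, `wall_clock_hours(128, 64)` gives 2.0, so a 128 core-hour budget would correspond to two hours on a hypothetical 64 cores. When run as a script, `count_concepts_parallel` should be called from under an `if __name__ == "__main__":` guard so that worker processes can start cleanly on every platform.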

Thanks to HPC, it was possible to:

- process extensive text files from various sources without capacity limitations,

- generate clear and structured data outputs that would take several times longer to produce on standard computers,

- test the potential of the supercomputer for social science and interdisciplinary research that connects human behaviour, data, and spatial relationships.

Results

The test computations confirmed that the use of high-performance computing infrastructure enables efficient processing and analysis of extensive textual data originating from various social, environmental, and cultural sources. By applying text mining methods, the team was able to gain insights into key themes and the relationships between different stakeholders involved in land-use decision-making.
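One common way to approximate relationships between stakeholders in text is to count how often pairs of actors are mentioned in the same document; the pair counts can then serve as edge weights in a network. The sketch below illustrates that idea in Python. The actor names and sample texts are invented, and the source does not state that the team used this particular technique.

```python
from collections import Counter
from itertools import combinations

# Hypothetical actor names, invented for this sketch.
ACTORS = ("farmers", "municipality", "investors", "residents")


def actors_in(text, actors=ACTORS):
    """Return the sorted actors mentioned in a text (simple substring match)."""
    lowered = text.lower()
    return sorted(a for a in actors if a in lowered)


def co_occurrence(texts, actors=ACTORS):
    """Count how many texts mention each pair of actors together."""
    pairs = Counter()
    for text in texts:
        pairs.update(combinations(actors_in(text, actors), 2))
    return pairs


# Invented sample texts, not taken from the project corpus.
texts = [
    "Farmers and investors disagree on the new solar park.",
    "The municipality consulted residents and farmers.",
    "Investors met farmers again.",
]
for (first, second), n in co_occurrence(texts).most_common():
    print(first, second, n)
```

In this toy input, the pair farmers and investors appears in two texts and every other pair in one, so it would form the strongest edge of the resulting network.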

The analysis revealed significant differences in how various groups perceive soil and the landscape — whether in terms of economic, ecological, or value-based priorities. These insights help identify areas where misunderstandings and conflicts arise, while also highlighting shared values that can serve as a foundation for constructive dialogue.

The research confirmed that the use of HPC infrastructure significantly improves data processing efficiency and enables complex analyses to be carried out in a timeframe that would be unfeasible with standard computing resources. This established a reliable foundation for the main phase of the project, in which the results of the testing stage will be expanded with new data sources and methodological approaches.

The obtained results represent the first step toward developing a tool capable of linking quantitative data with social contexts — thereby contributing to a deeper understanding of the relationship between people, the landscape, and decisions regarding its use.

Impact and future

The project confirmed that a high-performance computing environment provides significant benefits for social science and environmental research dealing with complex, unstructured data. The combination of social research and computational analytics has created a new approach that can be used to gain a deeper understanding of the relationship between humans, the landscape, and societal change.

From a methodological perspective, the project serves as a model example of how HPC can support interdisciplinary research that integrates environmental data, text corpora, legislation, and public discourse. Such an approach holds great potential within European initiatives focused on sustainable land management and landscape planning.

The results thus create a transferable framework that can be applied in both European and national projects — ranging from public policy research and participatory planning to the assessment of the social impacts of environmental decisions.

Data today can tell stories that we could not have captured just a few years ago. The research team harnessed the computational power of a supercomputer to analyse vast textual datasets in order to better understand how society perceives soil, landscape, and their value. The project demonstrates that the future of soil is hidden in data — and that high-performance computing can support not only scientists but also communities striving to find balance between development and sustainability.

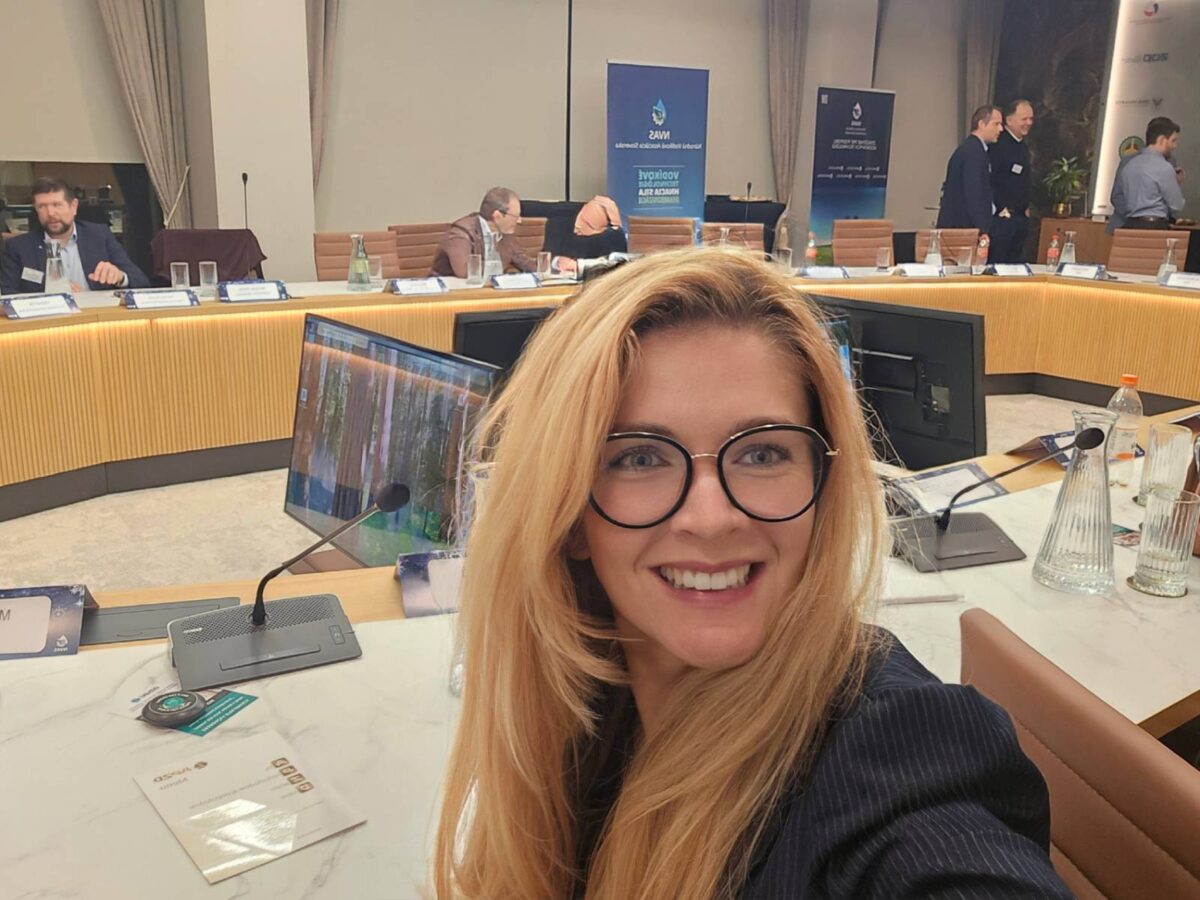

Strategic Development and Internationalisation 30 Apr - The National Supercomputing Centre (NSCC) was visited by Jari Hämäläinen, a renowned Finnish expert and adviser to the rector of the Slovak University of Technology in Bratislava (STU) for strategic development and internationalisation. The meeting with NSCC project coordinator Lucia Malíčková opened the door to a new level of cooperation between academia and top-tier computing infrastructure.

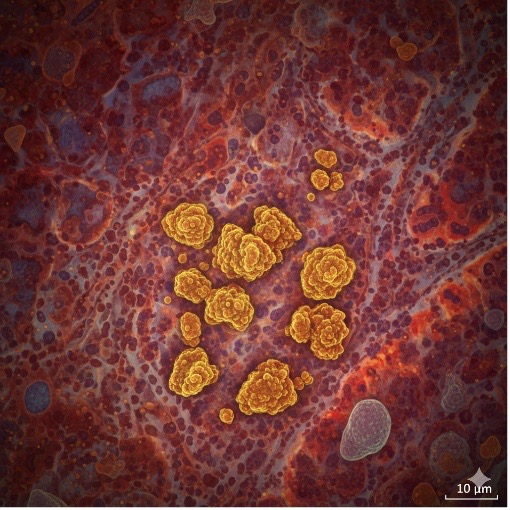

Artificial Intelligence and a Supercomputer as a New Weapon Against Environmental Disasters 26 Mar - Scientists from Nitra, Slovakia are teaching machines to predict industrial failures before they can cause damage. Thanks to collaboration with the European supercomputer LUMI, they have developed a digital “guardian” capable of detecting pipeline leaks or manufacturing faults with high accuracy—helping protect both the environment and companies’ budgets.

Want to Study Supercomputers? 7 May - Are you considering a master's degree and drawn to the world of high-performance computing, artificial intelligence, and data science?
