Who Owns AI Inside an Organisation? — Operational Responsibility

AI Accountability Dialogue Series

As artificial intelligence becomes embedded in everyday organisational processes, a practical question is coming to the foreground under the EU AI Act: who actually owns AI inside an organisation? With increasing reliance on third-party providers, foundation models, and distributed internal roles, traditional notions of ownership and responsibility are no longer sufficient.

This webinar focuses on how organisations can define clear operational responsibility and ownership of AI systems in a proportionate and workable way. Drawing on hands-on experience in data protection, AI governance, and compliance, Petra Fernandes will explore governance approaches that work in practice for both SMEs and larger organisations. The session will highlight internal processes that help organisations stay in control of their AI systems over time, without creating unnecessary administrative burden.

Date and Time:

Tuesday, 3 March 2026 | 10:00 CET (9:00 Portugal time)

Online | Free Registration

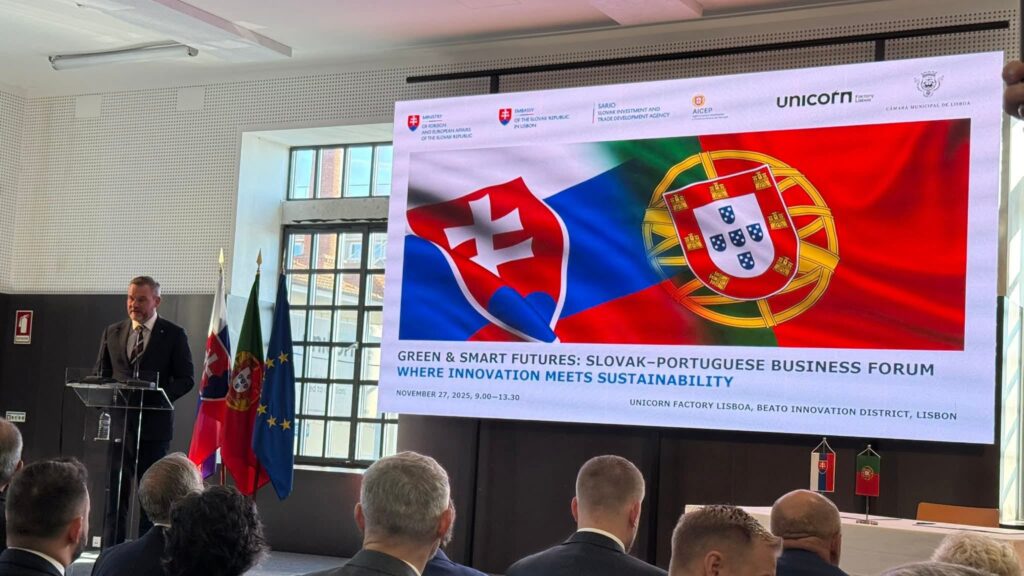

This webinar is organised by the Slovak National Supercomputing Centre as part of the EuroCC project (National Competence Centre – NCC Slovakia), in cooperation with NCC Portugal, within the AI Accountability Dialogue Series.

The webinar will be held in English.

Abstract:

The EU AI Act introduces new roles and obligations that reshape how responsibility for AI systems is distributed inside organisations. In practice, however, AI ownership is often fragmented across legal, technical, compliance, data, and business functions, and further complicated by dependence on third-party and foundation models.

This webinar examines how organisations can address these challenges by distinguishing operational responsibility from operational ownership, and by clarifying decision rights and accountability across the AI system lifecycle. It discusses practical governance mechanisms aligned with organisational size and risk, including internal monitoring, documentation, and traceability of AI systems. Particular attention is given to common deployment challenges such as unclear ownership boundaries, reliance on external providers, and the emergence of informal or “shadow” AI use.

Speaker:

Petra Fernandes

Lawyer – Data Protection, Artificial Intelligence & Cybersecurity

Petra Fernandes completed her law degree in 2003 and has since advised clients on legal and governance matters related to data protection, artificial intelligence, and cybersecurity. She has served as a Data Protection Officer and as a member of DPO teams for both private companies and public administrations.

In addition to advisory work, she regularly delivers training and awareness-raising sessions on data protection and AI governance for public and private sector organisations, with a strong focus on practical implementation and compliance.

Topics Include:

- AI ownership versus operational responsibility under the EU AI Act

- Roles and responsibilities of providers, deployers, and internal teams

- Proportional AI governance models for SMEs and large organisations

- Internal monitoring, documentation, and traceability of AI systems

- Managing ownership when using third-party and foundation models

- Addressing challenges such as shadow AI and informal AI use

Outline:

- Introduction: Why AI ownership is more than a legal issue

- The different players under the AI Act and their role in AI ownership

  - Provider and Deployer roles and internal organisational responsibility

  - Senior management accountability and decision-making authority

- Proportional AI governance models

  - Internal monitoring and documentation

  - Mapping AI systems and use cases

  - Embedding responsibility into procurement and development

- Challenges in real AI deployments

  - Fragmented ownership and unclear decision rights

  - Dependence on third-party and foundation models

  - Shadow AI and evolving systems

- Key priorities for establishing clear AI ownership

- Discussion and Q&A
